Reaction: Batch Processing Isn’t Dead—It’s Just Quietly Running the World

Let’s address the elephant in the room:

Batch processing doesn’t sound sexy.

No real-time dashboards.

No flashy “live updates.”

No “AI-powered streaming pipeline” buzzwords.

And yet…

👉 Batch processing is still doing most of the heavy lifting behind the scenes.

Chapter 10 is a reminder that while everyone obsesses over real-time systems, batch jobs are the ones actually getting things done.

🧠 The Core Idea

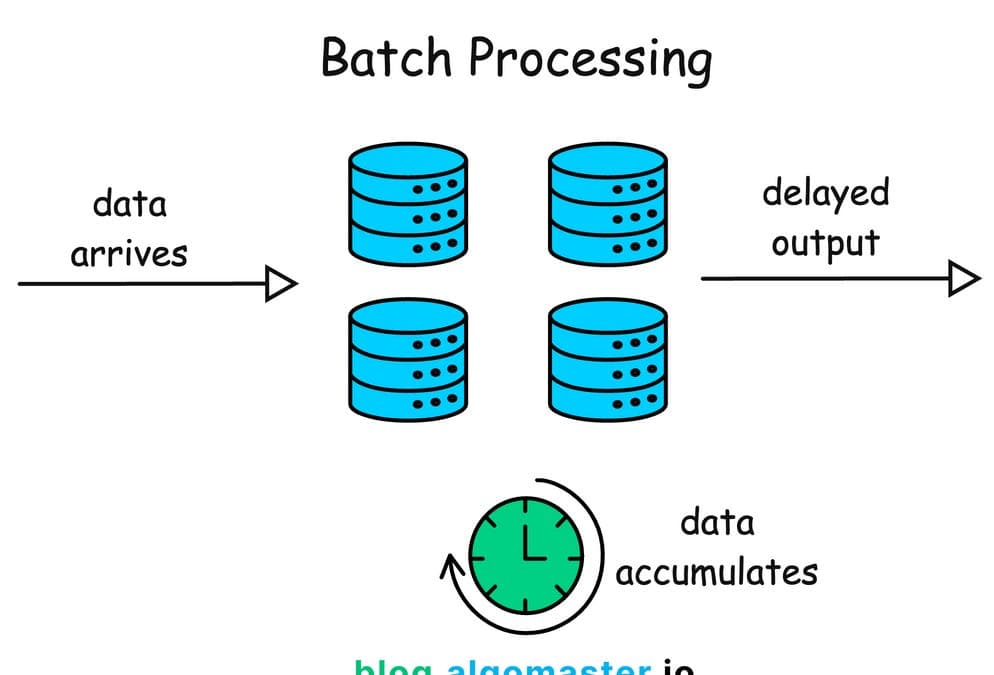

Batch processing is about:

👉 processing large volumes of data efficiently, not instantly

You:

collect data

process it later

produce results
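Here's a minimal sketch of that collect, process, produce loop. It assumes the "data" is a list of `(user, amount)` events gathered over some window (say, a day). `run_batch` is a hypothetical name, not anything from the chapter.

```python
from collections import defaultdict

def run_batch(events):
    """Process the whole collected batch at once: total amount per user."""
    totals = defaultdict(int)
    for user, amount in events:
        totals[user] += amount
    # Sort keys so that rerunning on the same input gives identical output.
    return dict(sorted(totals.items()))

# Collect: events pile up first; nothing is processed as they arrive.
collected = [("alice", 30), ("bob", 5), ("alice", 12)]

# Process later, produce results.
print(run_batch(collected))  # {'alice': 42, 'bob': 5}
```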

No rush. No drama. Just results.

⏳ Why Batch Still Matters

Real-time systems are great… until they’re not.

They:

cost more

are harder to maintain

introduce complexity

Batch processing, on the other hand:

is predictable

is easier to debug

handles massive data efficiently

More often than you'd think:

“fast enough” beats “real-time.”

🧩 Batch vs Stream (The Ongoing Debate)

Streaming fans will say:

“Everything should be real-time.”

Batch systems respond:

“Relax. Do you really need it now?”

Batch Processing

high throughput

efficient

delayed results

Stream Processing

low latency

real-time updates

more complex

💡 Reality Check

Most systems don’t pick one.

They combine both:

👉 Lambda-ish architectures

batch → correctness

stream → freshness

Because:

users want results that are both fast and accurate

(Yes, they want everything. Of course they do.)
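A toy sketch of the Lambda-ish split, assuming page-view counting is the workload. The batch layer recomputes counts from the full history, the speed layer counts only events that arrived after the last batch run, and queries add the two together. `batch_view`, `speed_view`, and `serve` are hypothetical names; real setups keep each view in a datastore, not in an in-memory `Counter`.

```python
from collections import Counter

def batch_view(all_events):
    """Batch layer: slow but complete, recomputed from full history (correctness)."""
    return Counter(page for page, _ts in all_events)

def speed_view(recent_events, batch_cutoff):
    """Speed layer: only events newer than the last batch run (freshness)."""
    return Counter(page for page, ts in recent_events if ts > batch_cutoff)

def serve(page, batch, speed):
    """Query time: merge both layers (a missing key counts as 0)."""
    return batch[page] + speed[page]

history = [("home", 1), ("about", 2), ("home", 3)]  # covered by the last batch run
cutoff = 3                                          # batch processed up to ts=3
live = [("home", 4), ("home", 5)]                   # arrived since then

batch = batch_view(history)
speed = speed_view(live, cutoff)
print(serve("home", batch, speed))  # 4
```

When the next batch run absorbs the live events, the speed layer's counts for that period can simply be thrown away.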

🗂 The Power of Immutable Data

One of the most underrated ideas in this chapter:

Treat data as immutable.

Instead of:

updating records

You:

append new records

Why this works:

easier debugging

safer reprocessing

reproducible results

Basically:

👉 logs > mutations
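A minimal sketch of "append, don't update," assuming a simple account-balance example. Every change is a new immutable record in a log, and current state is derived by replaying the log. `Event`, `append`, and `current_balances` are hypothetical names for illustration.

```python
from typing import NamedTuple

class Event(NamedTuple):
    account: str
    delta: int  # positive = deposit, negative = withdrawal

# Append-only log: new facts are added; existing entries are never edited.
log: list[Event] = []

def append(event: Event) -> None:
    log.append(event)

def current_balances(events):
    """Derive state by replaying the log from the beginning."""
    balances = {}
    for e in events:
        balances[e.account] = balances.get(e.account, 0) + e.delta
    return balances

append(Event("acct-1", 100))
append(Event("acct-1", -30))  # a change is a new record, not an UPDATE
append(Event("acct-2", 50))

print(current_balances(log))      # {'acct-1': 70, 'acct-2': 50}
print(current_balances(log[:1]))  # state as of the first event: {'acct-1': 100}
```

Because old records stay put, you can rebuild the state as of any point in time, which is exactly what makes debugging and reprocessing safer.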

🔁 Reprocessing Is a Superpower

Batch systems shine because they can:

👉 recompute everything

Made a mistake?

fix the code

rerun the job

Try doing that in a real-time system without sweating.
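A toy version of the fix-and-rerun loop, assuming the input is an immutable collection of order records. `revenue_v1` (buggy) and `revenue_v2` (fixed) are made-up functions; the point is that the corrected job just recomputes from the same untouched input and replaces the old result.

```python
from decimal import Decimal

# Immutable input: a tuple of (order_id, price) records no job ever modifies.
raw_orders = (("o1", "19.99"), ("o2", "5.01"), ("o3", "0.10"))

def revenue_v1(orders):
    # Bug: int() drops the cents from every order before summing.
    return sum(int(float(price)) for _order_id, price in orders)

def revenue_v2(orders):
    # Fix: sum exact decimal amounts instead.
    return sum(Decimal(price) for _order_id, price in orders)

bad_output = revenue_v1(raw_orders)
# Fix the code, rerun over the same input, replace the old result wholesale.
good_output = revenue_v2(raw_orders)
print(bad_output, good_output)  # 24 25.10
```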

🧠 MapReduce (The OG Workhorse)

Before all the fancy tools:

👉 there was MapReduce

Simple idea:

Map → turn each chunk of input into key-value pairs

Shuffle → group those pairs by key

Reduce → aggregate the values for each key

It’s not glamorous, but it works.

And honestly?

A lot of modern systems are just:

👉 MapReduce with better marketing
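The classic example is word count. Here's a single-process sketch of the map, shuffle, and reduce steps. It assumes each string in `chunks` stands in for a block of input that a real cluster would hand to a different machine; the function names are illustrative, not Hadoop's API.

```python
from collections import defaultdict
from itertools import chain

def map_phase(chunk):
    """Mapper: emit a (word, 1) pair for every word in one chunk."""
    for word in chunk.lower().split():
        yield (word, 1)

def shuffle(pairs):
    """Group all values by key (the framework does this between map and reduce)."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups

def reduce_phase(key, values):
    """Reducer: aggregate all values for one key."""
    return key, sum(values)

chunks = ["the cat sat", "the dog sat", "the end"]
mapped = chain.from_iterable(map_phase(c) for c in chunks)
result = dict(reduce_phase(k, v) for k, v in sorted(shuffle(mapped).items()))
print(result)  # {'cat': 1, 'dog': 1, 'end': 1, 'sat': 2, 'the': 3}
```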

⚙️ Dataflow Pipelines

Batch processing evolved into:

DAG-based pipelines

distributed jobs

fault-tolerant execution

Systems like:

Hadoop

Spark

They handle:

parallel processing

retries

failures

So you don’t have to babysit jobs at 3 AM (hopefully).
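To make "DAG-based pipeline" concrete, here's a sketch that declares each stage with its upstream dependencies and runs them in dependency order using Python's standard-library `graphlib`. The stage names and functions are hypothetical; Spark and friends build a similar graph for you from your transformations.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical pipeline: stage -> (upstream stages, function of their outputs).
stages = {
    "extract": ((), lambda: [3, 1, None, 4, 1, 5]),
    "clean":   (("extract",), lambda raw: [x for x in raw if x is not None]),
    "total":   (("clean",), lambda rows: sum(rows)),
    "maximum": (("clean",), lambda rows: max(rows)),
    "report":  (("total", "maximum"), lambda t, m: f"total={t} max={m}"),
}

def run_pipeline(stages):
    graph = {name: deps for name, (deps, _fn) in stages.items()}
    outputs = {}
    # static_order() yields every stage only after all of its upstream stages.
    for name in TopologicalSorter(graph).static_order():
        deps, fn = stages[name]
        outputs[name] = fn(*(outputs[d] for d in deps))
    return outputs

print(run_pipeline(stages)["report"])  # total=14 max=5
```

This runs sequentially for clarity. Since `total` and `maximum` don't depend on each other, a real engine would run them in parallel; `TopologicalSorter` also exposes `get_ready()` and `done()` for that style of scheduling.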

🧨 Failure Handling (Where Batch Wins)

In batch systems:

failures are expected

jobs are retryable

results are reproducible

Compare that to real-time systems:

👉 where failures can corrupt live state

Batch is like:

“We’ll just rerun it.”

Simple. Effective. Underrated.
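A minimal sketch of "we'll just rerun it," assuming one task writes one output file. Each attempt writes to a temporary file, and only an atomic `os.replace` makes the result visible, so a crashed attempt leaves no half-written output behind. `run_task_with_retries` and `flaky_task` are hypothetical names; real frameworks do this bookkeeping per task for you.

```python
import os
import tempfile

def run_task_with_retries(task, output_path, max_attempts=3):
    """Run a task; only a fully successful attempt becomes visible."""
    directory = os.path.dirname(os.path.abspath(output_path))
    for attempt in range(1, max_attempts + 1):
        fd, tmp_path = tempfile.mkstemp(dir=directory)
        try:
            with os.fdopen(fd, "w") as tmp:
                task(tmp, attempt)
            os.replace(tmp_path, output_path)  # atomic commit on success
            return attempt
        except Exception:
            os.remove(tmp_path)  # discard partial output, then retry
    raise RuntimeError(f"task failed after {max_attempts} attempts")

def flaky_task(out, attempt):
    out.write("row 1\n")
    if attempt < 2:
        raise OSError("simulated worker crash")  # first attempt dies midway
    out.write("row 2\n")

with tempfile.TemporaryDirectory() as workdir:
    path = os.path.join(workdir, "part-00000")
    attempts = run_task_with_retries(flaky_task, path)
    with open(path) as f:
        lines = f.read().splitlines()
    leftover = sorted(os.listdir(workdir))

print(attempts, lines, leftover)  # 2 ['row 1', 'row 2'] ['part-00000']
```

Note that "row 1" from the crashed first attempt doesn't show up twice: the retry starts clean because the failed attempt's output was thrown away.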

🧠 Locality Matters

Another subtle but important idea:

Move computation to data—not data to computation.

Why?

moving large datasets over the network is expensive

processing locally is faster

This is why distributed systems:

👉 schedule tasks near where data lives
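Here's a toy locality-aware scheduler, assuming each input block is replicated on a few nodes (as in a distributed filesystem). It prefers the least-loaded node that already holds a replica and only falls back to a remote read when no replica is available. `schedule`, `block_locations`, and the node and block names are hypothetical; real schedulers, such as Hadoop's, also weigh rack-level locality.

```python
def schedule(tasks, block_locations, nodes):
    """Assign each task to a node that already stores its input block, if any."""
    load = {n: 0 for n in nodes}
    assignment = {}
    for task, block in tasks.items():
        local = [n for n in block_locations.get(block, []) if n in load]
        candidates = local or list(nodes)  # fall back to reading remotely
        node = min(candidates, key=lambda n: (load[n], n))  # least loaded, then by name
        assignment[task] = (node, "local" if local else "remote")
        load[node] += 1
    return assignment

nodes = ["node-a", "node-b", "node-c"]
block_locations = {
    "blk-1": ["node-a", "node-b"],  # nodes holding replicas
    "blk-2": ["node-b", "node-c"],
    "blk-3": ["node-a", "node-c"],
    "blk-4": [],                    # e.g. no live replica known
}
tasks = {"map-1": "blk-1", "map-2": "blk-2", "map-3": "blk-3", "map-4": "blk-4"}

for task, placement in schedule(tasks, block_locations, nodes).items():
    print(task, placement)
```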

😂 Brutal Truth Section

Let’s be honest:

Real-time systems get the hype

Batch systems get the paycheck

Your dashboards might be real-time…

but your analytics, reports, and ML pipelines?

👉 Batch all the way.

🧠 Final Takeaways

Batch processing is about throughput, not latency

It’s simpler, more reliable, and easier to debug

Immutable data + reprocessing = powerful combo

Most real systems use both batch and streaming

“Real-time everything” is often unnecessary overkill

🔥 The Big Idea

Batch processing is not outdated.

It’s the foundation that real-time systems stand on.

🚀 Closing Thought

Not everything needs to be instant.

Sometimes, the smartest system is the one that says:

“Let’s process this later—and do it right.”